The availability of high-performance computing (HPC) resources and efficient computational methods allows the study of complex turbulent flows via time-dependent high-fidelity numerical simulations. This type of flow is ubiquitous in nature as well as in industrial applications, and it plays a crucial role in phenomena as diverse as atmospheric precipitation and the creation of the lift and drag forces acting on aircraft. In the context of computational fluid dynamics (CFD), we consider both direct numerical simulations and well-resolved large-eddy simulations (DNS and LES, respectively) as high-fidelity, scale-resolving simulations, in which most of the independent degrees of freedom of the system are resolved explicitly, without the aid of modeling. In the case of turbulent flows, due to the large separation of scales in both space and time, such an approach results in computational meshes that may contain between \(\approx 10^6\) and \(\approx 10^9\) grid points, and simulations that proceed for \(\approx 10^6\) time steps. A relevant example is the DNS of the flow around a wing profile, which employed \(2.3 \times 10^9\) grid points.

Carrying out these studies is challenging for two reasons: first, the computational cost is of the order of multiple millions of CPU hours, and second, the datasets created by each simulation can be as large as tens of terabytes. To mitigate the first difficulty, researchers have focused on developing codes with high strong scalability, which requires minimizing communication and load imbalance between nodes, as discussed, e.g., by Merzari et al. and Offermans. This approach results in software packages that, although they often employ sophisticated numerical strategies, are relatively simple and can be used efficiently on a large number of cores. In particular, CFD codes are often limited to the solution of partial differential equations and do not provide data analysis or visualization tools. Nek5000, which we consider in this work, is one such code. Because the software employed for the actual simulation is not equipped with tools for post-processing, the typical workflow followed by CFD researchers requires storing intermediate datasets externally, which then serve as the input for further analysis. This standard procedure has no significant drawback when the intermediate datasets are small, as in cases when only time-independent statistics are retained. However, the possibility of carrying out more complex post-processing analysis, such as studying the time evolution of topological features, is limited by the second obstacle mentioned above, i.e., very high input/output (I/O) requirements.

In our case, better scaling and load balancing in the parallel image composition would considerably improve the performance of Nek5000 with in situ capabilities. In general, the result of this study highlights the technical challenges posed by the integration of high-performance simulation codes and data-analysis libraries and by their practical use in complex cases, even when efficient algorithms already exist for a certain application scenario.
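To give a sense of scale for the I/O requirements mentioned in the introduction, the following back-of-the-envelope sketch estimates the storage needed for raw snapshots on a mesh of \(2.3 \times 10^9\) grid points. The number of stored fields (three velocity components plus pressure), the double-precision storage, and the number of snapshots are illustrative assumptions, not values taken from the study.

```python
def snapshot_bytes(n_points, n_fields=4, bytes_per_value=8):
    """Size in bytes of one uncompressed snapshot.

    n_fields=4 assumes three velocity components plus pressure;
    bytes_per_value=8 assumes double precision. Both are assumptions.
    """
    return n_points * n_fields * bytes_per_value


n_points = 2_300_000_000   # grid points quoted for the wing-profile DNS
n_snapshots = 500          # hypothetical number of stored time instants

per_snapshot = snapshot_bytes(n_points)
total = per_snapshot * n_snapshots

print(f"one snapshot: {per_snapshot / 1e9:.1f} GB")
print(f"{n_snapshots} snapshots: {total / 1e12:.1f} TB")
```

Under these assumptions a single snapshot already occupies tens of gigabytes, so a time-resolved series of a few hundred snapshots reaches the tens of terabytes mentioned above.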

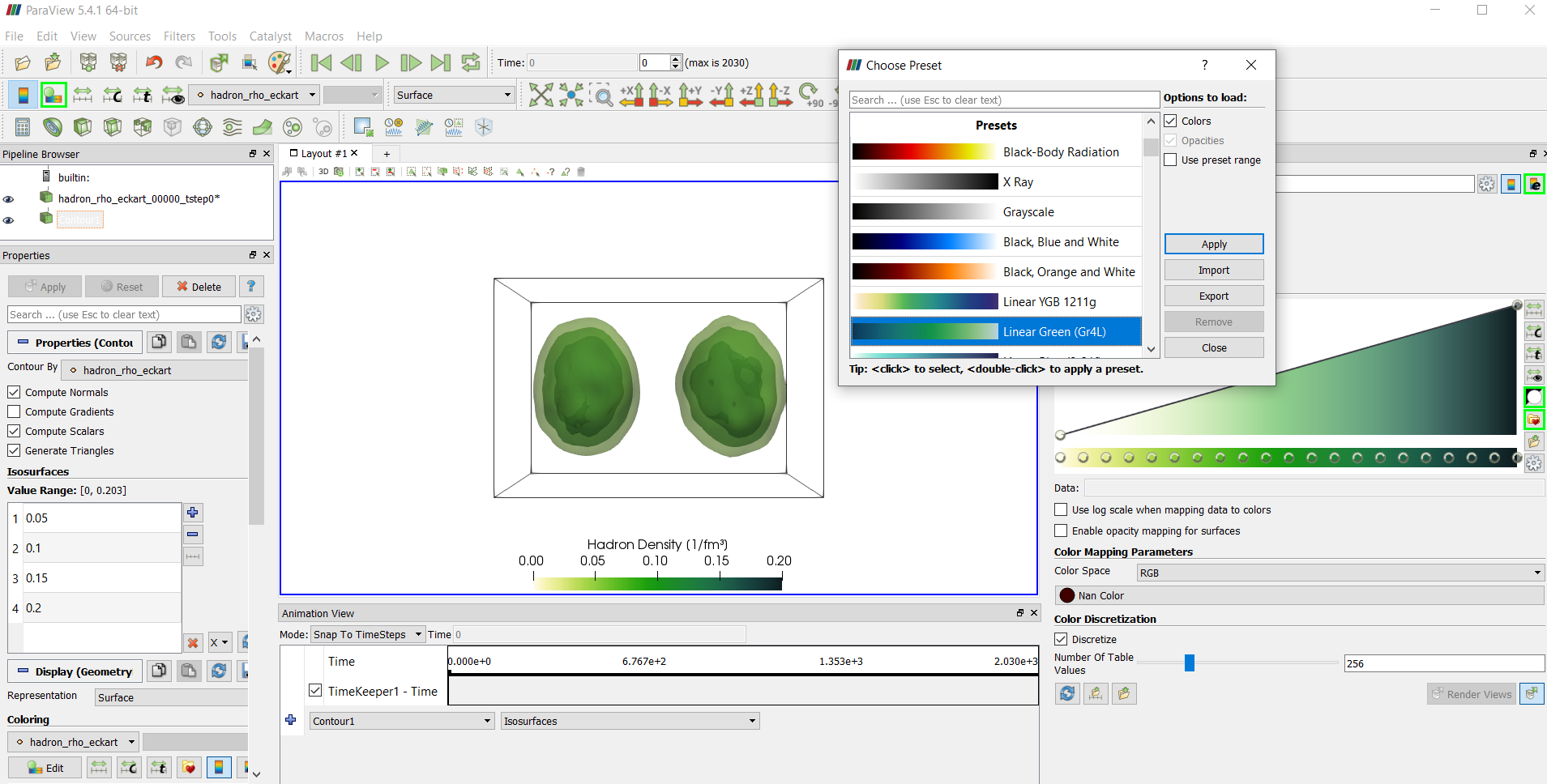

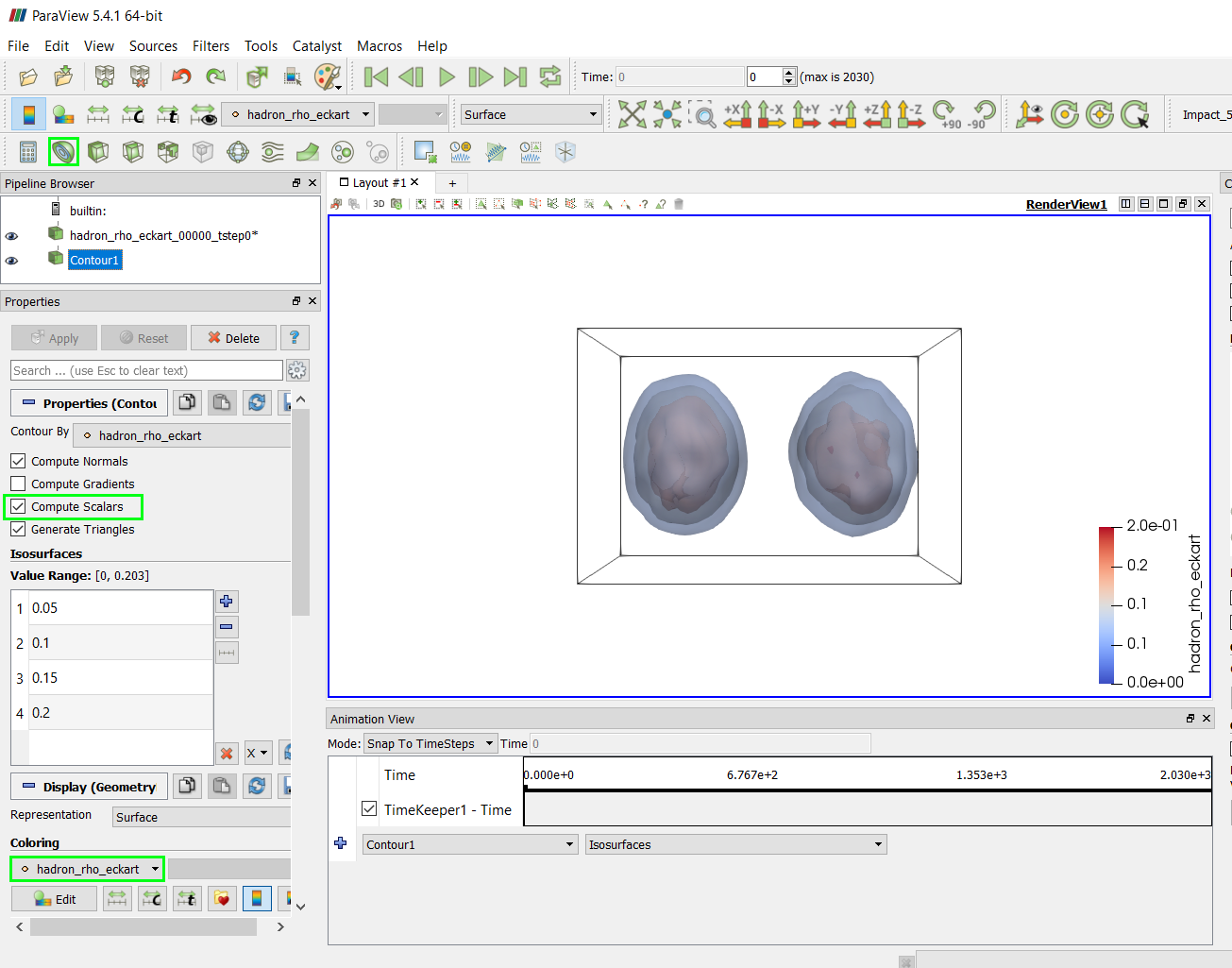

In situ visualization on high-performance computing systems allows us to analyze simulation results that would otherwise be impossible to analyze, given the size of the simulation data sets and the execution time of offline post-processing. We develop an in situ adaptor for ParaView Catalyst and Nek5000, a massively parallel Fortran and C code for computational fluid dynamics. We perform a strong scalability test up to 2048 cores on KTH’s Beskow Cray XC40 supercomputer and assess the impact of in situ visualization on Nek5000 performance. In our study case, a high-fidelity simulation of turbulent flow, we observe that in situ operations significantly limit the strong scalability of the code, reducing the relative parallel efficiency to only \(\approx 21\%\) on 2048 cores (the relative efficiency of Nek5000 without in situ operations is \(\approx 99\%\)). Through profiling with Arm MAP, we identified a bottleneck in the image composition step (which uses the Radix-kr algorithm), where a majority of the time is spent on MPI communication. We also identified an imbalance of in situ processing time between rank 0 and all other ranks.
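For readers unfamiliar with the metric, relative parallel efficiency in a strong-scaling test is commonly defined as the measured speedup with respect to a reference run divided by the ideal speedup. The sketch below uses this common definition; the reference core count and the timings are hypothetical placeholders, not measurements from the study.

```python
def relative_parallel_efficiency(t_ref, n_ref, t_n, n):
    """Strong-scaling efficiency of a run on n cores relative to a run on n_ref cores.

    t_ref, t_n: wall-clock time for the same problem on n_ref and n cores.
    Returns 1.0 for ideal scaling.
    """
    measured_speedup = t_ref / t_n
    ideal_speedup = n / n_ref
    return measured_speedup / ideal_speedup


# Hypothetical timings in seconds per time step (NOT data from the study)
t_ref, n_ref = 8.0, 256    # assumed reference run
t_n, n = 4.0, 2048         # assumed run on 8x more cores

eff = relative_parallel_efficiency(t_ref, n_ref, t_n, n)
print(f"relative parallel efficiency: {eff:.0%}")
```

In this made-up example, an eightfold increase in cores only halves the run time, giving an efficiency of 25%. This is the kind of degradation that communication-bound steps such as parallel image composition can cause.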
